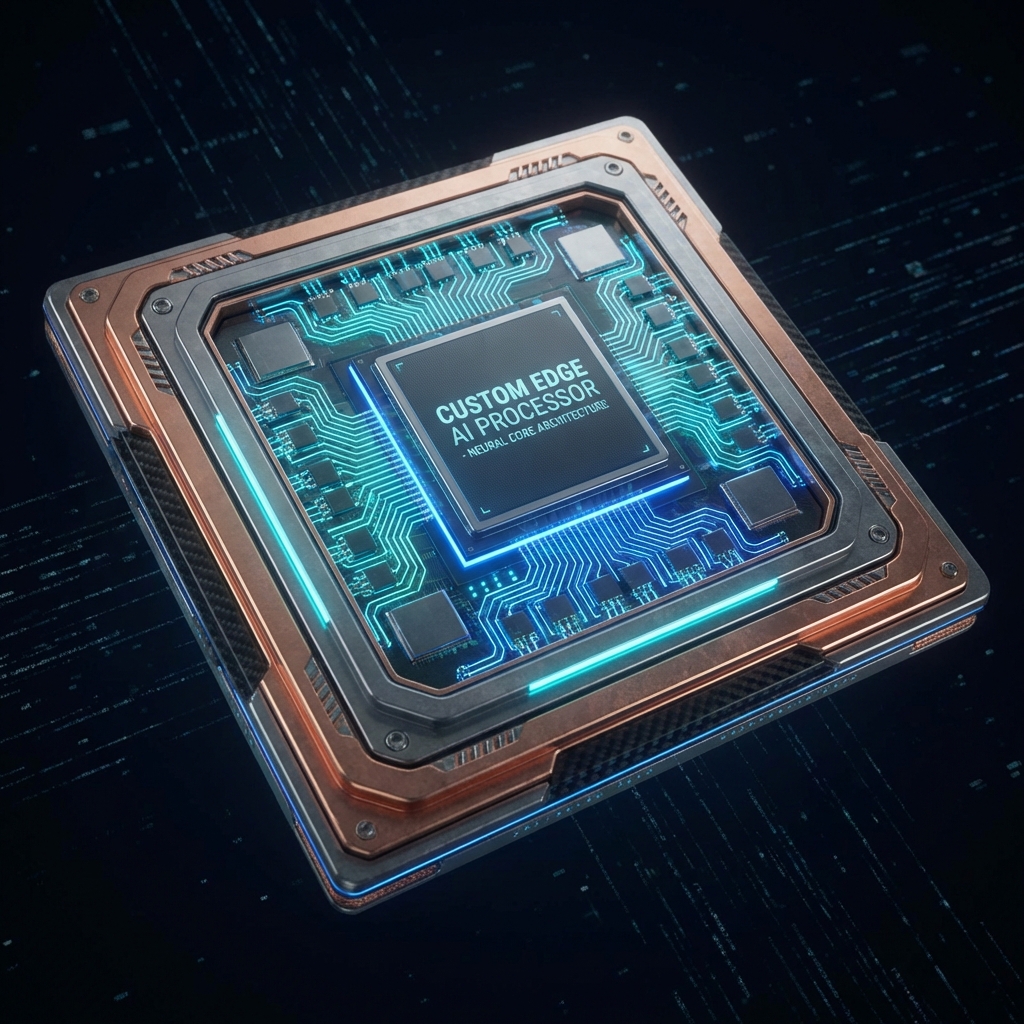

PROPRIETARY SILICON

Built for Speed.

Our custom hardware architecture eliminates the bottlenecks of general-purpose GPUs. By optimizing memory access patterns and compute units specifically for inference, we achieve unprecedented efficiency.

10x

Faster Inference

50%

Less Power

Zero

Latency Issues